Artificial Intelligence: Nature-Inspired Networks

Benito Jerónimo Feijoo, a Spanish popularizer of science who lived in the 17th and 18th centuries, once said that “there is not any knowledge among men, which is not forthcomingly or immediately deduced by experience”. Reality and nature are the objects and models that science studies. Nowadays there is also Artificial Intelligence (A.I.), an area of computer science concerned with machines that can mimic thinking and other “cognitive” functions of humans. Esteban Palomo, Ph.D., a faculty member at the School of Mathematical Sciences and Information Technology at Yachay Tech, works on improving self-organizing artificial neural networks, the networks that make it possible to cluster data. Much like our own brain, these networks store and group data by following patterns while promoting competition among their “neurons”. The overall goal of Esteban’s research is to improve the neural models that already exist.

A.I. is divided into two major research fields: the symbolic field, dedicated to developing more classical mathematical models, and the subsymbolic or bio-inspired field, dedicated to developing intelligent systems inspired by nature, with the aim of analyzing real data and discovering patterns.

Artificial neural networks, which belong to the subsymbolic field, are characterized by their capacity to learn. Some require supervision during that learning, through the introduction of previously labeled data. For example, a supervised neural network can receive data about people who suffer from cancer and people who don’t. When data about new people is introduced, the network can predict, based on the previously introduced data, whether they will suffer from cancer.
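To make the idea of learning from labeled examples concrete, here is a minimal sketch of a single artificial neuron (a perceptron). It is only an illustration: the two numeric features, the 0/1 labels and the function names `train_perceptron` and `predict` are all invented for this example and are not real medical data or part of Esteban's work.

```python
# Toy perceptron: learns from labelled examples, then labels new ones.
# Every number below is made up for illustration only.

def predict(weights, bias, x):
    """Return 1 if the weighted sum of the features is positive, else 0."""
    score = sum(w * v for w, v in zip(weights, x)) + bias
    return 1 if score > 0 else 0

def train_perceptron(samples, labels, epochs=50, lr=0.1):
    """Perceptron rule: adjust the weights only when a prediction is wrong."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            error = y - predict(weights, bias, x)  # -1, 0 or +1
            weights = [w + lr * error * v for w, v in zip(weights, x)]
            bias += lr * error
    return weights, bias

# Previously labelled data: two hypothetical measurements per person.
samples = [(1.0, 1.2), (0.8, 0.9), (1.1, 0.7), (3.0, 3.2), (2.8, 3.5), (3.3, 2.9)]
labels = [0, 0, 0, 1, 1, 1]

weights, bias = train_perceptron(samples, labels)

# New, unlabelled people: the trained neuron predicts a label for each.
new_people = [(0.9, 1.0), (3.1, 3.0)]
predictions = [predict(weights, bias, x) for x in new_people]
```

The supervision is the `labels` list: without it, the neuron would have no way of knowing when a prediction was wrong.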

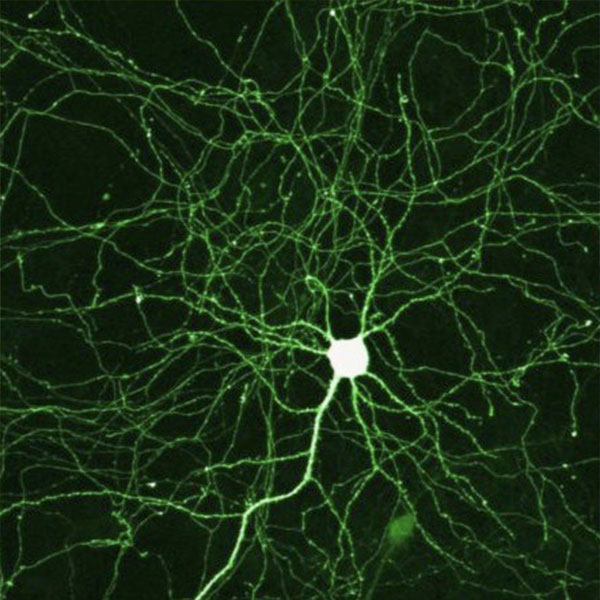

By contrast, there are also unsupervised neural networks. These self-organizing networks aim to classify and cluster data. When a very large amount of data has to be analyzed, the task of a self-organizing neural network is to search that data for patterns, clustering the information and building something that looks like a net. Imagine that every “neuron” on this “net” represents a data cluster. Commonly known as data mining, this process aims to extract information, patterns, relations and relevant structures from data.

This type of network has become a popular research subject for several reasons. The first is that we now produce a quantity of information that is humanly impossible to label or classify. For example, it is impossible to classify by hand the enormous number of web pages that exist today, so an unsupervised neural network can search for, say, web pages on gastronomy-related topics and then cluster them according to different indicators, assigning each page to the closest “neuron”. The other reason is that this process has a huge number of industrial and commercial applications. For example, it can provide useful information about what particular groups of customers buy on the internet, so that a product or service can be targeted at its audience more precisely.

This is how self-organizing neural networks create connections and adapt themselves to the distribution of the input data, without any guidance. In most cases, this data has multiple dimensions: every piece of data has many characteristics, which makes the process even more complex. Luckily, through research and science, we now have more clarity on how to continue improving these systems.
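The competition between “neurons” can be sketched in a few lines. In this simplified example, which assumes plain winner-take-all learning rather than any specific published model, each data point is claimed by its closest neuron, and only that winner moves a little toward the point. The three-dimensional data and the names `closest` and `competitive_learning` are hypothetical.

```python
# Winner-take-all competitive learning with no labels at all.
# Data and starting positions are invented for illustration.

def closest(neurons, x):
    """Index of the neuron nearest to x: the winner of the competition."""
    return min(range(len(neurons)),
               key=lambda i: sum((w - v) ** 2 for w, v in zip(neurons[i], x)))

def competitive_learning(data, neurons, epochs=20, lr=0.2):
    """Move only the winning neuron a fraction `lr` of the way toward each point."""
    neurons = [list(n) for n in neurons]
    for _ in range(epochs):
        for x in data:
            win = closest(neurons, x)
            neurons[win] = [w + lr * (v - w) for w, v in zip(neurons[win], x)]
    return neurons

# Each piece of data has three characteristics (three dimensions).
data = [(0.0, 0.2, 0.1), (0.3, -0.1, 0.0), (-0.2, 0.1, 0.3),
        (5.0, 5.1, 4.9), (4.8, 5.3, 5.2), (5.2, 4.9, 5.0)]

trained = competitive_learning(data, neurons=[(1.0, 1.0, 1.0), (4.0, 4.0, 4.0)])

# Each point is assigned to the cluster of its closest neuron.
clusters = [closest(trained, x) for x in data]
```

Nobody tells the network which points belong together; the grouping emerges from the distances alone.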

The first, and most important, self-organizing network was the Self-Organizing Map, created by Teuvo Kohonen: an unsupervised network designed to promote competition and self-clustering among neurons in order to better represent information. However, this map had some problems. To form the right network, the number of data clusters had to be predicted in advance, and the exact number of neurons needed to classify the information had to be specified before training began. Another problem was the map on which the neurons are arranged, because it was a fixed topology, usually a grid.
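The two limitations described above can be seen in a small sketch of a self-organizing map. This is a simplified one-dimensional version (a chain of neurons instead of a grid), and the decay schedules and parameter values are assumptions chosen for illustration. Note that `n_neurons` must be chosen before training starts and that neighborhood is defined by a neuron's fixed position in the chain.

```python
import math

def train_som(data, n_neurons=5, epochs=50, lr0=0.5, sigma0=2.0):
    """1-D self-organizing map: a fixed chain of `n_neurons` neurons."""
    dim = len(data[0])
    lo = [min(p[d] for p in data) for d in range(dim)]
    hi = [max(p[d] for p in data) for d in range(dim)]
    # Start the chain evenly spaced along the diagonal of the data's bounding box.
    neurons = [[lo[d] + (hi[d] - lo[d]) * i / (n_neurons - 1) for d in range(dim)]
               for i in range(n_neurons)]
    for epoch in range(epochs):
        frac = epoch / epochs
        lr = lr0 * (1 - frac)                 # learning rate shrinks over time
        sigma = sigma0 * (1 - frac) + 0.1     # so does the neighbourhood width
        for x in data:
            # Competition: the closest neuron wins.
            winner = min(range(n_neurons),
                         key=lambda i: sum((w - v) ** 2 for w, v in zip(neurons[i], x)))
            # Cooperation: the winner's neighbours in the chain also move.
            for i in range(n_neurons):
                h = math.exp(-((i - winner) ** 2) / (2 * sigma ** 2))
                neurons[i] = [w + lr * h * (v - w) for w, v in zip(neurons[i], x)]
    return neurons

# Invented data lying along a curve.
data = [(i / 10, (i / 10) ** 2) for i in range(11)]
som = train_som(data)
```

However the data is distributed, the map ends with exactly the number of neurons it started with, arranged in the same chain.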

Later on, an improved version was born: the Growing Neural Gas, commonly known as GNG. This network overcomes the limitations of the Self-Organizing Map through the automatic growth of the network. With a flexible, graph-based topology, the GNG can adapt itself to any shape. But this network can be improved too. This is why Esteban Palomo, along with Ezequiel López Rubio from the University of Málaga, created the Growing Neural Forest, or GNF, a model that improves on the GNG. The GNF builds an ensemble of GNGs that have been transformed from graphs into trees. This increases its flexibility and its adaptability to the input data, giving the network a greater capacity to select and recognize data.
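As a rough illustration of automatic growth on a graph, the following sketch follows the commonly described GNG procedure: neurons are linked by edges that age and disappear, and a new neuron is periodically inserted where the accumulated error is largest. It is not the GNF and not the team's code. All parameter values, the data and the function name `gng` are assumptions made for this example.

```python
import random

def dist2(a, b):
    return sum((p - q) ** 2 for p, q in zip(a, b))

def gng(data, max_units=10, steps=2000, lam=50, eps_b=0.2, eps_n=0.006,
        a_max=50, alpha=0.5, decay=0.995, seed=0):
    rng = random.Random(seed)
    pos = {0: list(rng.choice(data)), 1: list(rng.choice(data))}  # start with two neurons
    err = {0: 0.0, 1: 0.0}
    age = {}          # edge (frozenset of two neuron ids) -> age
    next_id = 2
    for t in range(1, steps + 1):
        x = rng.choice(data)
        s1, s2 = sorted(pos, key=lambda i: dist2(pos[i], x))[:2]  # two closest neurons
        err[s1] += dist2(pos[s1], x)
        pos[s1] = [p + eps_b * (v - p) for p, v in zip(pos[s1], x)]
        for e in list(age):
            if s1 in e:                        # edges leaving the winner get older,
                age[e] += 1                    # and its neighbours move slightly
                (n,) = e - {s1}
                pos[n] = [p + eps_n * (v - p) for p, v in zip(pos[n], x)]
        age[frozenset((s1, s2))] = 0           # create or refresh the winner's edge
        for e in [e for e, a in age.items() if a > a_max]:
            del age[e]                         # drop edges that are too old
        linked = set().union(*age)
        for i in [i for i in pos if i not in linked]:
            del pos[i]                         # drop neurons left without edges
            del err[i]
        if t % lam == 0 and len(pos) < max_units:
            q = max(err, key=err.get)          # neuron with the largest error
            nbrs = [next(iter(e - {q})) for e in age if q in e]
            f = max(nbrs, key=err.get)         # its worst neighbour
            r, next_id = next_id, next_id + 1
            pos[r] = [(a + b) / 2 for a, b in zip(pos[q], pos[f])]
            del age[frozenset((q, f))]         # the new neuron goes between q and f
            age[frozenset((q, r))] = 0
            age[frozenset((r, f))] = 0
            err[q] *= alpha
            err[f] *= alpha
            err[r] = err[q]
        for i in err:
            err[i] *= decay
    return pos, set(age)

# Invented data: three blobs of points.
gen = random.Random(1)
data = [(cx + gen.gauss(0, 0.3), cy + gen.gauss(0, 0.3))
        for cx, cy in [(0, 0), (5, 5), (10, 0)] for _ in range(30)]
units, edges = gng(data)
```

Unlike the map in the previous section, the caller only sets an upper limit (`max_units`); the network itself decides where neurons are added and which connections survive.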

But the GNF is not the only improved version of the GNG created by this team. The Growing Neural Hierarchical Gas, or GNHG, consists of a tree of GNGs with an improved algorithm. This new network allows data to be classified hierarchically. For example, instead of just clustering all gastronomy web pages together, it can subdivide them into smaller clusters, such as signature cuisine, national cuisines and fusion cuisine, and then into even smaller clusters, such as Italian, Spanish and French cuisine within the “national cuisines” cluster. These two improvements matter a great deal in practical terms, because they provide new ways of clustering data at different, more detailed levels.
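The idea of clusters within clusters can be sketched as a small tree. To keep the example short, each level here is split with a tiny k-means routine, which is only a stand-in for the GNG used at each node of the real model. The data, the parameters and the names `split` and `build_tree` are hypothetical, and the sketch assumes `k` is no larger than `min_size`.

```python
def dist2(a, b):
    return sum((p - q) ** 2 for p, q in zip(a, b))

def split(points, k, iters=20):
    """Tiny k-means with farthest-point initialisation (a stand-in for a GNG)."""
    centers = [list(points[0])]
    while len(centers) < k:
        far = max(points, key=lambda p: min(dist2(c, p) for c in centers))
        centers.append(list(far))
    for _ in range(iters):
        groups = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: dist2(centers[i], p))
            groups[nearest].append(p)
        for i, g in enumerate(groups):
            if g:
                centers[i] = [sum(c) / len(g) for c in zip(*g)]
    return [g for g in groups if g]

def build_tree(points, k=2, depth=2, min_size=4):
    """Cluster the points, then cluster each cluster again, `depth` levels deep."""
    node = {"size": len(points), "children": []}
    if depth > 0 and len(points) >= min_size:
        node["children"] = [build_tree(g, k, depth - 1, min_size)
                            for g in split(points, k)]
    return node

# Invented data: two broad groups, each made of two tighter sub-groups.
points = [(0, 0), (0, 1), (3, 0), (3, 1), (20, 0), (20, 1), (23, 0), (23, 1)]
tree = build_tree(points)
```

In the gastronomy example, the root would be “all gastronomy pages”, its children the broad categories, and their children the finer ones such as individual national cuisines.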

Esteban will continue his research at Yachay Tech, hoping to soon apply his knowledge of data mining and neural networks here in Ecuador.